Recommending an i7 for gaming is probably the biggest scam currently running in PC gaming. For anyone building a PC, unless you really want one, you do not need an i7. The top i5 for any given socket (typically $100 or more cheaper than the matching i7) is perfectly fine.

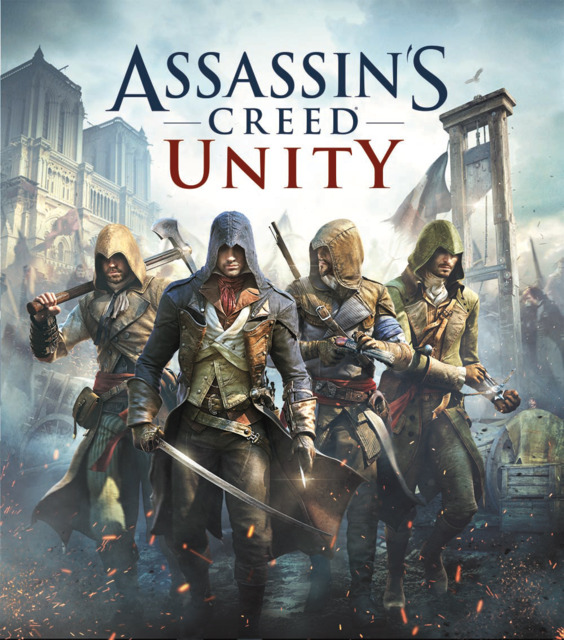

Assassin's Creed Unity

Game: 13 releases. Released Nov 11, 2014

The Assassin's Creed series heads to Paris, France, amid the French Revolution. The player controls Arno Dorian, an Assassin, as he attempts to disrupt and destroy the true powers behind the Revolution.

System requirements released...oh boy

@anywhereilay: Like SoM? Meaning anything decent (my rig: 2500K + GTX 560) can run it on mid-high. I feel these "recommended" specs are more like bragging rights these days.

@rorie: The point is that they won't have the graphical fidelity to justify the large resource use, like Watch Dogs. Which just shows terrible optimization on their part. Or they just fluff up the minimum reqs because they can. Nothing shown justifies these requirements, and I highly doubt anything will.

Yeah, you might be forgetting that they're rendering 5,000 people on screen, compared to some 250 in the previous games. That's gonna take some power to realize. I don't think you'll really be able to appreciate it before you see it.

It's worth noting that Watch Dogs is still technically a cross-gen game, however, whereas Unity is specifically only coming out on PS4/XB1 and PC.

Not worrying about this stuff is why I love console games. PC master race my ass. Fight me, computer geeks!

But in all seriousness, it seems like most companies just are not even trying to optimize. Maybe PC sales of new games are so not worth it that they don't even think about it.

Maybe Ubisoft is still paranoid about piracy, so on top of having their asinine DRM client, they actively decide not to optimize / put any work into their PC releases to try to drive sales to consoles? They might be trying to scare people into getting it on PS4 or Xbox One.

Wouldn't put it past them.

I would guess that the game will run okay for people below the minimum who don't have ancient rigs.

@corevi: Short answer: Yes.

I only got Ass Creed 2 and Brotherhood running at a steady 60 with no stutters when I bought a 780. Didn't they use to farm out their PC ports to one of their small studios? Ubi Kiev or someone.

What kinda annoys me about these kinds of requirements is that a lot of these games don't even really look much better than the stuff we've been playing for years now... so, like, why do we need so much more "horsepower" to run them even on lower settings, where they actually look worse than some of the things we've been playing for years?

There's probably a good reason for it, I dunno.

People should stop buying into this stuff. It's just marketing making the game seem super impressive, even though it tends to have the opposite effect.

And also Ubisoft being not so good at porting to PC.

Oh boy, regretting buying it early now... well, I think I should be able to get by at min specs, I suppose.

What people need to keep in mind is that this game will run at 900p and 30FPS on the consoles. The last time we had this freakout (The Evil Within), the game couldn't even hit 30FPS on the new consoles. With Shadow of Mordor before that, the high requirements were for extra settings way above what the consoles used. This game will probably require an insane PC to max out at 60FPS and 1080p. However, matching the consoles, probably with a slightly smoother framerate and higher resolution, won't be that hard. Of course it could still be a terrible port, but people have made a habit of overreacting to this stuff recently. I would most likely not recommend pre-ordering it, though.
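For a rough sense of the gap being described, here is a back-of-the-envelope Python sketch. It only counts pixels per second, assuming "900p" means 1600x900 and ignoring per-pixel cost, higher settings, and the extra CPU work of hitting 60FPS, so treat it as a rough floor on the extra GPU work, not a benchmark.

```python
def pixel_rate(width: int, height: int, fps: int) -> int:
    """Pixels drawn per second; ignores overdraw, AA and per-pixel shading cost."""
    return width * height * fps


# Assumption: "900p" is read as 1600x900 (16:9).
console = pixel_rate(1600, 900, 30)
pc_1080p30 = pixel_rate(1920, 1080, 30)
pc_1080p60 = pixel_rate(1920, 1080, 60)

print(f"1080p30 vs console: {pc_1080p30 / console:.2f}x the pixels per second")
print(f"1080p60 vs console: {pc_1080p60 / console:.2f}x the pixels per second")
```

So a sharper 1080p at the console's 30FPS needs about 1.44x the pixel throughput, while 1080p60 needs about 2.88x, before any settings are raised above console level.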

Since this is Ubisoft, I wouldn't be surprised if hardware below the minimum requirements ran the game better than hardware meeting the recommended ones.

The only system capable of hitting 1080/60 is the Commodore 64

I call bullshit. If I went purely by the posted system specs, my computer wouldn't meet the minimum requirements to run Shadow of Mordor. Instead I can run at a mix of medium/high settings and hit 60 fps most of the time. It probably helps that I have motion blur off, but I think motion blur effects look terrible in just about every game.

Ubisoft has a spotty track record, but I doubt the game will straight up not run if you have less than what has been posted.

Where the hell are all these several gigabyte VRAM specs coming from? First time I ever saw it was for Shadow of Mordor, have I just been out of the loop? Are there even cards with more than 3GB of VRAM apart from the ones that cost like a car?

I have a GTX 770 with 4GB of VRAM. It was new last year and cost 430 bucks. Good investment, all told.

Surprised at how low the CPU and RAM requirements are; I've had those for years. But the graphics card ones are pretty damn high. Hopefully it just means turning texture resolution down to high and reducing the shadows lets it run well, but you never know; Ubisoft's PC ports are totally random in quality these days.

It's sort of crazy that even a GTX 770 doesn't meet the recommended specs.

My card as well... I'm calling BS until I see how it runs.

Where the hell are all these several gigabyte VRAM specs coming from? First time I ever saw it was for Shadow of Mordor, have I just been out of the loop? Are there even cards with more than 3GB of VRAM apart from the ones that cost like a car?

Was always going to happen with the new consoles actually having some humane amount of memory; assets were simply going to get bigger (or, more to the point, catch up). There are plenty of 4GB cards on the market that are more humanely priced, like the R9 290 or the custom versions of the R9 270X, GTX 760 and GTX 770. Personally, I've been advising people who don't upgrade regularly to spend a little more and pick up cards with more memory for quite a while now, since this was always going to happen.
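To see why bigger assets chew through VRAM so quickly, here is a rough Python sketch of texture memory. It assumes square textures with a full mip chain and the standard per-texel sizes of uncompressed RGBA8 (4 bytes) and the block-compressed BC3/BC7 (1 byte) and BC1 (0.5 bytes) formats. It ignores the 4x4 block padding on the smallest mips, as well as render targets and streaming pools, so real usage will be higher.

```python
# Bytes per texel for common formats (BCn formats store 4x4 texel blocks).
BYTES_PER_TEXEL = {"RGBA8": 4.0, "BC3/BC7": 1.0, "BC1": 0.5}


def texture_bytes(size: int, bytes_per_texel: float, mipmaps: bool = True) -> int:
    """Approximate memory for a size x size texture, optionally with its full mip chain."""
    total = 0.0
    level = size
    while True:
        total += level * level * bytes_per_texel
        if not mipmaps or level == 1:
            break
        level //= 2  # each mip level halves width and height
    return int(total)


for fmt, bpt in BYTES_PER_TEXEL.items():
    for size in (2048, 4096):
        mib = texture_bytes(size, bpt) / 2**20
        print(f"{fmt:8} {size}x{size}: {mib:6.1f} MiB")
```

Doubling texture resolution quadruples the memory, which is how a higher texture tier can push a 2GB card over the edge, while block compression keeps the same texture four to eight times smaller than uncompressed RGBA8.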

The base versions of the 970 and 980 also come with 4GB of VRAM.

True but he wanted cheaper examples ;)

Fucking assholes! I just bought a goddamn 770! And it doesn't meet recommended! Glad I got the 4GB. Yeah, I had a 465 up until June/July and it ran games really damn well at 720p on mostly high settings without AA, and with high-end shadows. It wasn't until Black Flag that I felt I really had to upgrade my card to make a game playable.

As funny as it sounds, Battlefield 4 seems like the only recent game that looks amazing and actually seems to benefit from ridiculously expensive hardware.

I shouldn't have to, like, buy another R9 290, or scrap my recently upgraded system just to play these games ported from $400 console hardware.

I wonder how this bodes for Far Cry 4; granted, FC3 was a pretty decent port...

Far Cry 4 is also coming to last gen, so those minimum specs are going to be pretty damn low.

Also, who wants to bet the specs required for Witcher 3 will be reasonable as fuck and it will probably look better?

They are. Pretty sure they said the unoptimized version needs a 680 to run on high.

There are two options here.

- This is just a shitty Ubisoft port like always and it will run like shit and look like shit...

- Ubisoft is actually making a game with next-gen graphics and, for the first time, taking advantage of superior PC hardware. It's what everyone wants, right? If you can't keep up with insanely expensive PC hardware, then consoles are for you, my friend. It's true the game has a very slim window for graphics adjustment, sure, but I'll take that any day over a game that looks like low-end console graphics.

CPU requirements are always bullshit, as the CPU is a non-factor in PC gaming. I suspect Intel/AMD pays to get the CPU into the recommendations, even though a 10-year-old mainstream CPU would do fine in every game. It has always been like this.

Also, who wants to bet the specs required for Witcher 3 will be reasonable as fuck and it will probably look better?

They are. Pretty sure they said the unoptimized version needs a 680 to run on high.

If Witcher 2 is anything to go by, Witcher 3 will not be a well-optimized game, but CDPR have more experience and money backing them up this time.

Wow. Why is everyone automatically jumping to the conclusion that these specs are unrealistic? I know this is kind of a douche thing to say, but check out any PC gaming related posts on this site from a year ago. I always jumped in and said that anybody buying a PC this year was wasting their money, because specs jump up massively the year AFTER a new console release. This is not a new thing; these kinds of spec jumps have happened with EVERY console release since '96.

I mentioned that if you do buy a video card, you need a minimum of 4GB of VRAM, and everyone said I was crazy and that 2GB is all you need to run current games (not thinking six months ahead is a stupid idea when building a PC). Then I mentioned that it would be wise to pick up a Core i7 instead of an i5, because games are going to need more CPU than last gen and hyper-threading will be more important (you know, since all the consoles have an 8-core CPU, it makes sense that any console port would run better with an 8-core CPU), and it turns out I was right. Or how about all the people who contradicted my advice that 8GB of RAM should be considered the minimum for any build?

Also, it is not like these hardware examples have not been talked about in the past. Remember that Unreal Engine 4 demo featuring the grizzled cyborg detective fighting robots? When they debuted that demo, Epic was talking about how it needed a GTX 680 to run. Since Unreal Engine 4 was designed around the GTX 680 as a baseline, it only stands to reason that it should be considered the minimum spec for any PC gaming in the future.

The fact that a lot of gamers refuse to realize is that this is not a case of poor optimization; this is just the spec needed to run things properly. Think about it: the PS4 has system specs that are not far off the minimum requirements. Then you realize that it is a console, and developers can always make a game run more smoothly on a console because they are allowed to code right to the metal and do not have to worry about a variety of hardware (think about it, every CPU generation has a different instruction set and machine code that affects games differently [i.e., 1011001 means something different on every CPU]). PC gaming will always have at least twice the minimum hardware requirements of a console game, and no matter how many casual or new PC gamers say otherwise, this will always be the case.

As an example of what I am saying, look back and read the forum threads about PC performance of Assassin's Creed 4. People were complaining about how the PS4 version was so much better than the PC version. Despite the fact that the PS4 version was the equivalent of running it on Low settings at 1080p 30fps, people were complaining about not being able to get 1080p 60fps with the graphics turned up to high. People were not willing to lower the graphical options because of some weird idea that not turning the graphics up all the way was somehow preventing them from experiencing the game how it was meant to be played. Apparently, not having the hardware to max out a game and being forced to choose a medium or low graphical setting is all it takes for a game to be considered a bad port.

Also, if you are ever having a bad framerate in a PC game the first things you should do are:

- Turn down Textures... the difference between ultra and high textures is almost always just the level of compression. You will almost never actually see a difference between the two; the ultra textures are almost always there for the people who buy $1000 video cards (because why not support them? If you spend that kind of money on a video card, you are more likely to spend money on a game that supports it).

- Turn down SSAO... SSAO (screen-space ambient occlusion) approximates part of global illumination, and there is not much that can hurt performance more than global illumination effects. Turning off SSAO will usually increase performance by more than 50%.

- The higher the resolution, the more VRAM you need; if you have a 1440p monitor and a 2GB video card, you need to reduce your screen resolution. There is no debate on this fact. In most instances, if you are seeing stuttering, you should lower the resolution; this will increase the minimum FPS, which is the stat that determines how smoothly a game plays.

- Learn to limit frame rates. A steady 30fps will ALWAYS appear smoother than a variable 40fps, unless you have a G-Sync monitor (totally worth the money). Even if the game never dips below 30fps, a variable frame rate will appear choppier than a steady one.
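The "steady 30 beats variable 40" point is easiest to see on a fixed-refresh screen. This Python sketch assumes a 60 Hz display with v-sync, where each frame stays on screen for a whole number of refreshes, so a 40fps average really means alternating 16.7 ms and 33.3 ms frames. The `suggest_cap` helper is a hypothetical rule of thumb, not a setting from any game: cap at the highest rate of the form refresh/n that your minimum FPS can sustain.

```python
import math
from statistics import mean, pstdev

REFRESH_HZ = 60                  # assumed fixed-refresh display, v-sync on
REFRESH_MS = 1000 / REFRESH_HZ   # about 16.7 ms per refresh

# Each frame is held for a whole number of refreshes.
steady_30 = [2 * REFRESH_MS] * 12                 # every frame held for 2 refreshes
variable_40 = [REFRESH_MS, 2 * REFRESH_MS] * 6    # alternates 1 and 2 refreshes


def summarize(name: str, frame_times_ms: list[float]) -> tuple[float, float]:
    """Return (average FPS, frame-time standard deviation in ms)."""
    avg_fps = 1000 / mean(frame_times_ms)
    jitter_ms = pstdev(frame_times_ms)
    print(f"{name}: {avg_fps:.1f} fps average, frame times vary by +/-{jitter_ms:.1f} ms")
    return avg_fps, jitter_ms


def suggest_cap(refresh_hz: int, min_fps: float) -> float:
    """Hypothetical rule of thumb: highest refresh_hz / n (n = 1, 2, 3...) at or below min_fps."""
    if min_fps <= 0:
        raise ValueError("min_fps must be positive")
    return refresh_hz / math.ceil(refresh_hz / min_fps)


summarize("steady 30", steady_30)
summarize("variable 40", variable_40)
print(f"cap for a game that never drops below 35fps: {suggest_cap(REFRESH_HZ, 35):.0f} fps")
```

The variable run has the higher average, but every other frame lingers twice as long, which is the judder the post describes. G-Sync avoids this by refreshing the display whenever each frame is ready instead of on a fixed schedule.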

Dunno why people are so surprised at high VRAM requirements. The next-gen consoles have 8GB of memory for both CPU and GPU access. What did you expect to happen when these games were brought over to PC?

CPU requirements are always bullshit, as the CPU is a non-factor in PC gaming. I suspect Intel/AMD pays to get the CPU into the recommendations, even though a 10-year-old mainstream CPU would do fine in every game. It has always been like this.

How is a CPU a "non-factor"? You think all games are GPU-bound? Also good luck running modern games on a single-core Intel Pentium 4.

In fact, AC has always been heavily CPU-bound. I've got a machine with SLI GTX 680s hooked up to my TV for couch gaming, and AC4 still stutters here and there with the visuals cranked up high, yet GPU usage never reaches 100%. That's usually a pretty good indication that there is either a memory or CPU limitation at play. Considering how heavy the crowd AI is in those games, it probably bogs down how quickly the rendering threads can feed the GPU. Assassin's Creed would probably be a prime candidate for DX12 or Mantle.
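The reasoning above (stutter while GPU usage stays below 100% points away from the GPU) can be written down as a rough Python heuristic. The 95% threshold and the sample values are hypothetical; real samples would come from whatever monitoring overlay you use, and with SLI each card's samples should be checked separately.

```python
from statistics import mean


def likely_bottleneck(gpu_util_percent: list[float], threshold: float = 95.0) -> str:
    """Rough read of GPU-utilization samples captured while a game stutters.

    threshold is an assumed cut-off, not an established standard.
    """
    if not gpu_util_percent:
        raise ValueError("need at least one utilization sample")
    if mean(gpu_util_percent) >= threshold:
        return "GPU-bound: lower resolution or GPU-heavy settings"
    # The GPU sits idle part of the time, so something upstream
    # (CPU threads, memory, driver overhead) isn't feeding it fast enough.
    return "not GPU-bound: likely CPU- or memory-limited"


# Hypothetical samples, one list per card in an SLI pair.
for card, samples in {"GPU 1": [62, 70, 58, 75], "GPU 2": [60, 66, 55, 71]}.items():
    print(card, "->", likely_bottleneck(samples))
```

This is only a first pass: averaged utilization can hide short spikes, so per-frame CPU and GPU timings from a profiler give a clearer answer.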

@kung_fu_viking: no problem

Welp!!! Now I'm scared of the Far Cry 4 specs...

It's the same engine as Far Cry 3, so it's technically a last-gen engine. Expect specs similar to Far Cry 3's; Unity was built from the ground up for next gen.

I find it hilarious how many people actually think these specs are anything other than pure BS. There is no way those recommended specs are even close to what is needed to play the game on max. I will be shocked if the game even makes full use of a quad-core CPU (Black Flag doesn't), but it says you need an i7? Give me a break; even the bulls are offended by this odor.
