It's been a long time since I read up on it, but IIRC, the widespread Xbox failures were due to cutting R&D/QA short on the chips to get the Xbox out there. I guess I'm not entirely sure what you mean by 'off the shelf'. I'm fairly sure both companies fab their own chips; it's just a matter of how far they deviate from the designs supplied by whomever.

"Off the shelf" meaning designed for general-purpose use, or only slightly modified. The benefits are keeping R&D costs down, plus ease of use for developers who are already familiar with an existing architecture.

The PS2, for example, had the Emotion Engine and Graphics Synthesizer chips designed in-house by Sony engineers from the ground up, while the original Xbox used a 733MHz Intel processor derived from the desktop Pentium III/Celeron line. With this last generation, the 360 and PS3 both used IBM PowerPC processors. While the 360 pushed the envelope at the time with a triple-core processor when most desktops were running dual-core, it was still running on a fairly well understood PowerPC foundation. Sony used a single PowerPC core as the root of the Cell, but then, in conjunction with IBM, designed an architecture around it focusing on the 7 SPE co-processors. Beyond the base PowerPC core, Sony did most of the heavy design lifting, and it ultimately resulted in a chip that was (historically) challenging to program for. Although they touted the Cell as being a viable option to put into televisions, computers, and food processors, ultimately it pretty much only ever was included in the PS3. (So not "off the shelf.")

While there are real benefits to using off-the-shelf technology, the major downsides would be having components that aren't optimized for (in this case) your console, and developers getting the most out of your chip very quickly and then plateauing. I've heard it said that Sony intentionally made the Cell challenging so there would be a slow, gradual curve of improvement over the life of the system, in order to keep people excited about progressively newer and better-looking games.

In any case, the biggest criticism leveled against using off-the-shelf components is a psychological one. People see that and wonder what the point is of buying that console, if it's just more of something that already exists somewhere else. That's been a big criticism of the Ouya, which not only uses a standard Tegra 3 chip, but is also pretty much just a repackaged Android tablet with a different UI and a bundled Bluetooth controller. When the first Xbox came out, it was effectively just a compact PC with almost the exact same components you'd find in a PC with an Intel processor and an nVidia video card. The criticism is, "why should I get something that I already own or can get with better specs?"

Conventional wisdom dictates that something custom built for a purpose is always going to be better, even if a good case can be made for the opposite. Times have changed though, and we've come to the point where the vast majority of games are completely multiplatform, put out on damn near anything that will run them. The more off-the-beaten-path an architecture is, then, the more likely it will miss out on something when publisher accountants crunch the numbers of what it would cost to massage the code onto yet another platform vs potential revenue. That's why you saw a ton of PC/360 releases this last cycle where the PS3 got a poorer port, a delayed port, or was left out entirely.

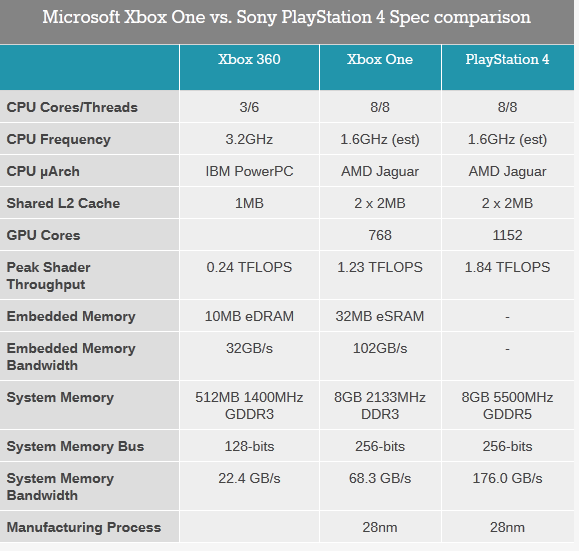

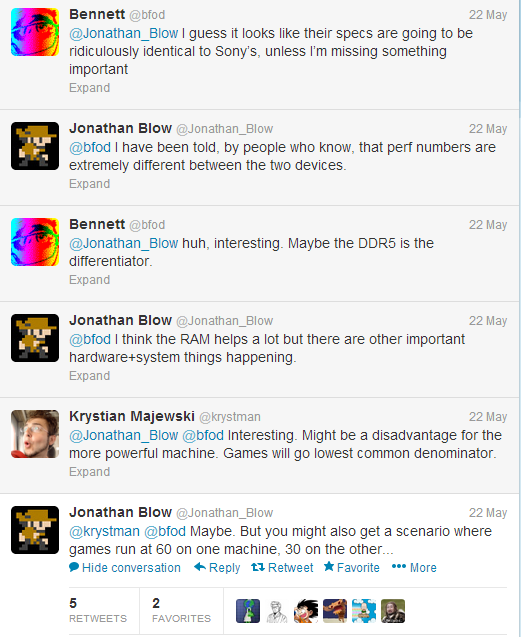

It's a bit of a moot distinction this generation, as both consoles are going to be using almost the same components. Sony is allowing theirs to be slightly more conventional, though, using faster RAM instead of putting the onus on developers to juggle slower RAM with a faster 32MB embedded cache. It's not the developmental gulf of the last generation, presumably, but that, coupled with the reported difficulty of working with Microsoft these days, will probably lead to the PS4 being the lead SKU on most games this time.
