The People's Game of the Decade

By DevourerOfTime

Sometimes you sit down and you have an idea, and it's a stupid one to follow through on, but you do it anyway and you can't explain why. Pretty sure Jeremy Medina knows that feeling all too well…

The idea came to me when I read Metacritic's Best of the 2010s feature, which showcased not just how broken their own system is and how rapidly tastes have changed over the past ten years, but also a list of publications' top ten lists for the decade. There was a “ranking” of the games based on how many times each game was mentioned in those lists, but…

I don't like it.

Don’t get me wrong, the list probably had what popular opinion would call the top 20 games of the last decade, but it was so… sterile… so… safe. What about the indie darlings and the cult classics? What about the mobile hits and the niche simulation games with arcane mechanics?

No, this wouldn’t do. I’d make my own list, using my own algorithm and my own sources. And I’d bring the people’s voices into it too! Not through easily manipulated user review scores, but through their own words on the topic: forums, blogs, YouTube videos, and the lists on this fair site. But before I started, to keep things impartial, I’d need to figure out

THE ALGORITHM

Like all ranking systems, I needed a fair and accurate weighting system that could be used to judge the data. Or, more accurately, a weighting system that my own biases and values deemed fair and accurate for judging the data. And I needed one that worked across four major scenarios:

- Top 10 lists, with each rank having a different weight.

- Unranked lists, still giving relevant points to each game on them.

- Large lists like Top 100s, without letting them have too much influence.

- Lists shorter than 10, still giving them influence.

A fair and accurate system… a fair and accurate system… a system that shows a game fairly and accurately… a system that fairly and accurately shows a game’s worth… a system that fairly and accurately shows a game’s skill…

No, no, no. That wouldn’t work. Unless…

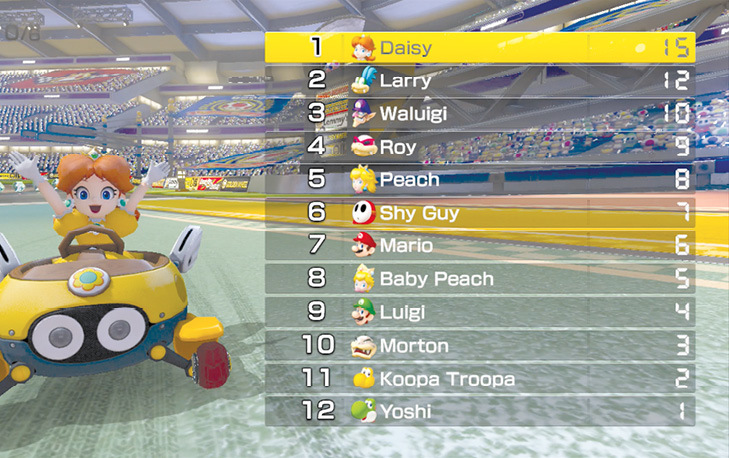

No, that makes no sense at all. There’s no way to make Mario Party's systems work with this data.

But what if I...

Yes… Yes of course! Mario Kart has been balancing a system of doling out points for 8 and 12 player races for decades. It’s a well tested formula used to give first place a competitive edge, but not give each position behind it an insurmountable climb to first. Mario Kart 8 even came out this decade, so it all fits! So the point values will be as follows:

Any game positioned below 12th gets the same points as 12th (basically a pity point for showing up). This should give:

- First place a distinct advantage (about 20% of a top 10's points).

- Top 10s the bulk of the potential points (96% of a top 12's points).

- Top 100s more total influence than top 10s (91 more points), but those extra points are spread out over 90 more titles.

- Lists shorter than 10 a fair percentage (e.g. Top 3 = 47%, Top 5 = 68% of a top 10's points).
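For anyone who wants to check the percentages above, here is a minimal sketch of the scoring rule in Python. The actual point table was only shown as an image, so the values below are an assumption: the standard Mario Kart 8 12-player table (15, 12, 10, 9, 8, 7, 6, 5, 4, 3, 2, 1), which does reproduce the 96% and 91-point figures. The helper names `points_for_rank` and `list_total` are made up for illustration. Note that first place works out to about 19% of a top 10, which rounds to the "about 20%" above.

```python
# Assumed points table: Mario Kart 8, 12-player races (1st through 12th).
MK8_POINTS = [15, 12, 10, 9, 8, 7, 6, 5, 4, 3, 2, 1]


def points_for_rank(rank: int) -> int:
    """Points for a game at `rank` (1-based) on a ranked list.

    Anything below 12th gets the same pity point as 12th.
    """
    if rank < 1:
        raise ValueError("rank must be 1 or greater")
    return MK8_POINTS[min(rank, 12) - 1]


def list_total(length: int) -> int:
    """Total points handed out by a ranked list with `length` entries."""
    return sum(points_for_rank(r) for r in range(1, length + 1))


if __name__ == "__main__":
    top10 = list_total(10)
    print(f"First place share of a top 10: {points_for_rank(1) / top10:.0%}")
    print(f"Top 10 share of a top 12: {top10 / list_total(12):.0%}")
    print(f"Extra points in a top 100 vs a top 10: {list_total(100) - top10}")
    for n in (3, 5):
        print(f"Top {n} as a share of a top 10: {list_total(n) / top10:.0%}")
```

Capping the rank with `min(rank, 12)` is what turns everything past 12th into the one-point floor, so a top 100 only adds one point per extra game.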

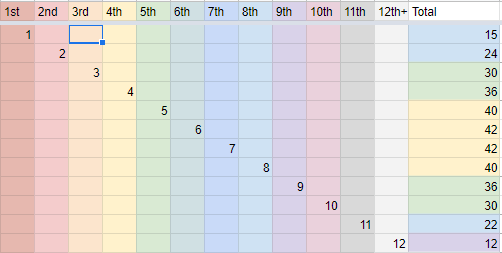

Okay, looking good for ranked lists, but what about unranked lists? Well, my first idea was to give every game on an unranked list of x games the points for xth place. And you know what? It actually turned out surprisingly well. Here are the point totals for unranked lists:

It might look unfair that you need 6 or 7 entries to hand out the most total points, but just like how top 100s lose their influence by spreading too thin, the more games on your list, the less influence you have on the position of any one game. It ended up being a pretty good compromise: unranked lists still have sway, and I didn't have to either play a guessing game about what goes above what or rule them out altogether.

(Also, if an unranked list implied a pretty clear ranking (“definitely in my top 3 of the decade”, “this is the game of the decade and the rest are runner-ups”), I still put those games in the position that made the most sense.)
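The unranked rule is easy to tabulate. This sketch again assumes the Mario Kart 8 12-player table (the original table image isn't available), and the function names are hypothetical. It prints, for unranked lists of 1 to 12 games, the points each game gets and the total the list hands out, which peaks at 42 for lists of 6 and 7 games.

```python
# Assumed points table: Mario Kart 8, 12-player races (1st through 12th).
MK8_POINTS = [15, 12, 10, 9, 8, 7, 6, 5, 4, 3, 2, 1]


def points_for_rank(rank: int) -> int:
    """Points for a position; anything below 12th gets the 12th-place point."""
    return MK8_POINTS[min(rank, 12) - 1]


def unranked_points_per_game(length: int) -> int:
    """Every game on an unranked list of `length` games gets that position's points."""
    return points_for_rank(length)


def unranked_list_total(length: int) -> int:
    """Total points an unranked list of `length` games hands out."""
    return length * unranked_points_per_game(length)


if __name__ == "__main__":
    for n in range(1, 13):
        each = unranked_points_per_game(n)
        print(f"{n:>2} games: {each:>2} points each, {unranked_list_total(n):>2} total")
```

Past 12 entries every game sits at the one-point floor, so a long unranked list can still add up, but it can't push any single game very far.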

The algorithm is set, now I just need to establish some ground rules before getting the data.

THE RULES

First off: ranking order. This is a scientific list using science and numbers, so there need to be rules on which numbers beat others, so we can scientifically say game x is better than game y.

Also, if I just go by how many points each game gets, there will be way too many ties, so there has to be a tiebreaker system. (Seriously, looking at the final data, there would have been something like 53 games in ties, and nobody wants that.)

Thus the games are ranked in this order:

- Total number of points across all lists

- The highest position a game got on anyone’s list

- The number of lists that placed it at that highest position

- If all of the above are equal, the games tie.

(In the end this resulted in five two-way ties, which I can live with.)
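Here's a rough sketch of how the totals and the three tiebreakers could be computed in Python, rather than a copy of my actual spreadsheet. It reuses the assumed Mario Kart 8 table, and a few things are assumptions for illustration: the input shape (each list as a `(games, is_ranked)` pair), the names `Tally`, `tally`, `sort_key` and `rank_games`, and treating every game on an unranked list of n games as sitting at position n for the best-position tiebreaker too.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple

# Assumed points table: Mario Kart 8, 12-player; below 12th gets the 12th-place point.
MK8_POINTS = [15, 12, 10, 9, 8, 7, 6, 5, 4, 3, 2, 1]


def points_for_rank(rank: int) -> int:
    return MK8_POINTS[min(rank, 12) - 1]


@dataclass
class Tally:
    points: int = 0
    best_position: Optional[int] = None  # highest (numerically smallest) position reached
    lists_at_best: int = 0               # how many lists placed the game at best_position


def tally(lists: List[Tuple[List[str], bool]]) -> Dict[str, Tally]:
    """Each entry is (games, is_ranked); ranked lists are ordered best first.

    Assumption: every game on an unranked list of n games sits at position n.
    """
    totals: Dict[str, Tally] = defaultdict(Tally)
    for games, is_ranked in lists:
        for index, game in enumerate(games, start=1):
            position = index if is_ranked else len(games)
            t = totals[game]
            t.points += points_for_rank(position)
            if t.best_position is None or position < t.best_position:
                t.best_position, t.lists_at_best = position, 1
            elif position == t.best_position:
                t.lists_at_best += 1
    return dict(totals)


def sort_key(t: Tally) -> Tuple[int, int, int]:
    # More points first, then a better best position, then more lists at that position.
    return (-t.points, t.best_position, -t.lists_at_best)


def rank_games(lists: List[Tuple[List[str], bool]]) -> List[Tuple[int, str, Tally]]:
    """Return (place, game, tally) rows; games equal on all three criteria share a place."""
    ordered = sorted(tally(lists).items(), key=lambda item: sort_key(item[1]))
    rows: List[Tuple[int, str, Tally]] = []
    for i, (game, t) in enumerate(ordered):
        if i > 0 and sort_key(t) == sort_key(ordered[i - 1][1]):
            place = rows[-1][0]  # tie: reuse the previous place
        else:
            place = i + 1
        rows.append((place, game, t))
    return rows


if __name__ == "__main__":
    example = [
        (["A", "B", "C"], True),
        (["B", "A"], True),
        (["C", "D"], False),  # unranked: C and D each get 2nd-place points
    ]
    for place, game, t in rank_games(example):
        print(place, game, t.points, t.best_position, t.lists_at_best)
```

In the example, A and B both end up with 27 points, a best position of 1st, and one list at that position, so they share first place and C drops to third. That is the same kind of two-way tie described above.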

THE LISTS

This is where this idea went from "stupid thing I can't get out of my head" to "thing I've worked on way too much over the past two weeks". Luckily, I find plugging numbers into spreadsheets an oddly soothing exercise, so going a little overboard isn't too big of a deal.

I’ve been collecting lists from websites, YouTube videos, blogs, forums (actually, just here and discourse.zone), podcasts, and Giant Bomb user lists. I wanted a roughly equal split between “player” and “professional” lists, but this does skew more towards the “player” side, mostly because there was way more data and a lot of those lists were shorter (the forum posts are mostly just choices for #1). Looking at the final list, though, that doesn't seem to have had much of an effect (sorry Giant Bomb community, I know you wanted Sleeping Dogs in that Top 10).

I did, however, allow myself to veto some lists due to a few factors:

- Lists that read too much like a “best-selling” list. You’d be surprised how many “professional” sites published lists like this.

- Lists that were focused on one platform or publisher. I still used most of them, just giving everything 1 point instead of a ranking.

- Lists from sources that were hateful or malicious.

- Lists I didn’t respect. This was a low bar to clear, but some still couldn’t clear it. Sorry not sorry, but Angry Birds and its many spinoffs were not the best games of the last ten years. It wasn’t even the best game of the ten years before that when it was a flash game.

That all being said, if I missed your list, you, the one reading this, should know that I probably just overlooked it. I am human, after all.

#BuildingTheList

And after around 60 professional sources, over 100 Giant Bomb user lists, and 30 miscellaneous lists added on top, the list is built and ready to go.

I just need to finish, you know, writing it.

With 703 games across everyone's lists, I couldn't have write-ups ready for all of them. So I'm going to put up #100 through #51 as a plain list right now, right here, then edit and finish it once the write-ups are done. Probably by the end of tomorrow.

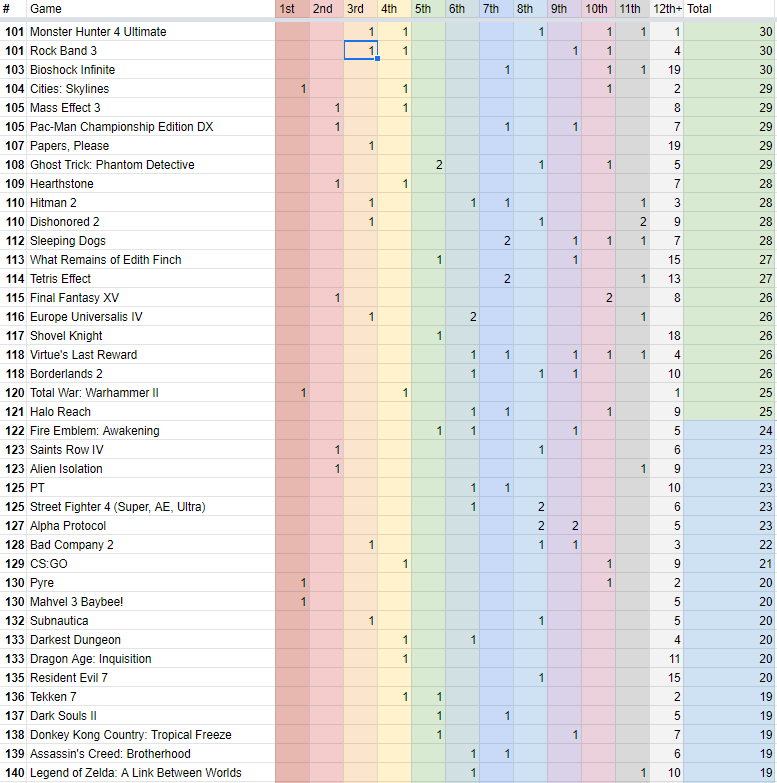

Until then, enjoy this screenshot of the 40 games that just missed getting into the top 100. It gives you a good look at how the spreadsheet worked as well. (If you want to look at my sources or anything else, I can send the Google Docs link once this is all done.)

After ranking 703 games, I have compiled the list, including quotes from industry professionals, game reviewers, YouTubers, and users of this very site. Enjoy.

https://www.giantbomb.com/profile/devoureroftime/lists/the-peoples-game-of-the-decade/367782/

Here are the forty runners-up: